The growing dominance of a handful of benchmark datasets (BMDs) comes at the exclusion of datasets that may be more specialized or better suited to testing certain models but are less well known and less well regarded. This reduces incentives to use them and naturally strengthens the influence of the BMDs and of the institutions that created them.

The institutions most responsible for producing the current crop of BMDs have also become less diverse (a rise from roughly 0.65 to 0.83 over 2015 to 2020, where higher values imply less diversity), and as of 2021 include only 12: Stanford, Microsoft, Princeton, Max Planck, Google, Chinese University of Hong Kong, AT&T, Toyota Technological Institute at Chicago, New York University, Georgia Tech, UC Berkeley, and Facebook.

According to the paper’s authors, the AI/ML community’s push toward standardization grew out of a need for easy agreement on universal metrics of progress, which makes it easier to push the technology forward. Following the “AI Winter” of the 1980s, when “government funders sought to more accurately assess the value received on grant,” popular and widely used benchmark datasets gave ML projects a way to “formalize a particular task through a dataset and an associated quantitative metric of evaluation.” The benefits of standardizing benchmarking and key datasets are three-fold.

Using the Gini coefficient, a metric traditionally used to measure income or wealth inequality within a population, the authors found that from 2015 to 2020 the concentration of usage on the most popular datasets rose from roughly 0.6 to 0.75, where higher values imply a less diverse spread.
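
To make the metric concrete, here is a minimal sketch of how a Gini coefficient can be computed over dataset usage counts. It uses the standard rank-weighted formula and is not necessarily the exact procedure the paper's authors followed. The function name `gini` and the usage counts are hypothetical and chosen only for illustration.

```python
def gini(values):
    """Gini coefficient of non-negative values (0 = perfectly even spread)."""
    xs = sorted(values)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        raise ValueError("need at least one positive value")
    # Standard rank-weighted form:
    #   G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n,  with i = 1..n
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


# Hypothetical usage counts (papers per dataset), not taken from the study.
even_usage = [25, 25, 25, 25]    # every dataset used equally often
skewed_usage = [1, 2, 3, 94]     # one dataset dominates

print(round(gini(even_usage), 3))    # 0.0
print(round(gini(skewed_usage), 3))  # 0.7
```

In this toy example, spreading usage evenly gives a score of 0, while letting one dataset absorb most of the usage pushes the score toward 1, which is the direction of the trend the article reports.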

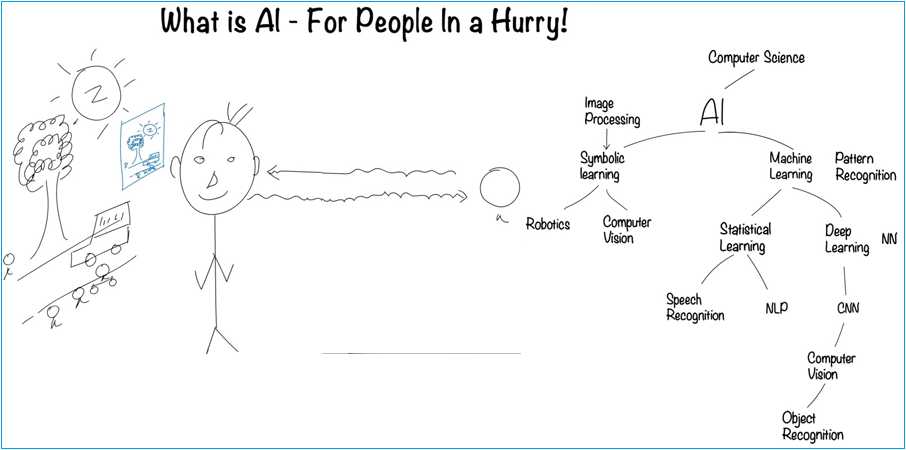

Popular datasets produced by a cadre of elite institutions dominate machine learning research, and the year-over-year trend is toward less diversity, according to a new UCLA and Google Research paper.

As the focus on AI ethics mounts, the datasets we use as a standard to measure the quality of AI training data are coming under increasing scrutiny.

A new paper from the University of California, Los Angeles and Google Research has found that machine learning research is dominated by a handful of popular benchmark datasets (BMDs), most of which originate from a small cadre of elite academic, business, and government institutions.

While this unofficial industry-wide standardization of BMDs may be beneficial for scientific progress, the paper’s authors argue that their growing popularity and lack of diversity raise a series of ethical, social, and even political concerns for the development of AI and machine learning (ML).

The study was conducted using the Facebook-backed Papers With Code. It analyzed the dataset usage patterns of machine learning subcommunities dedicated to specific tasks (e.g., computer vision and natural language processing), as well as the distribution of datasets used to benchmark models in those communities and their institutional origins.
